This article introduces an idea I’ve been exploring while working with AI-assisted design and coding workflows.

As AI becomes faster and more capable at producing UI, some tension is starting to show up:

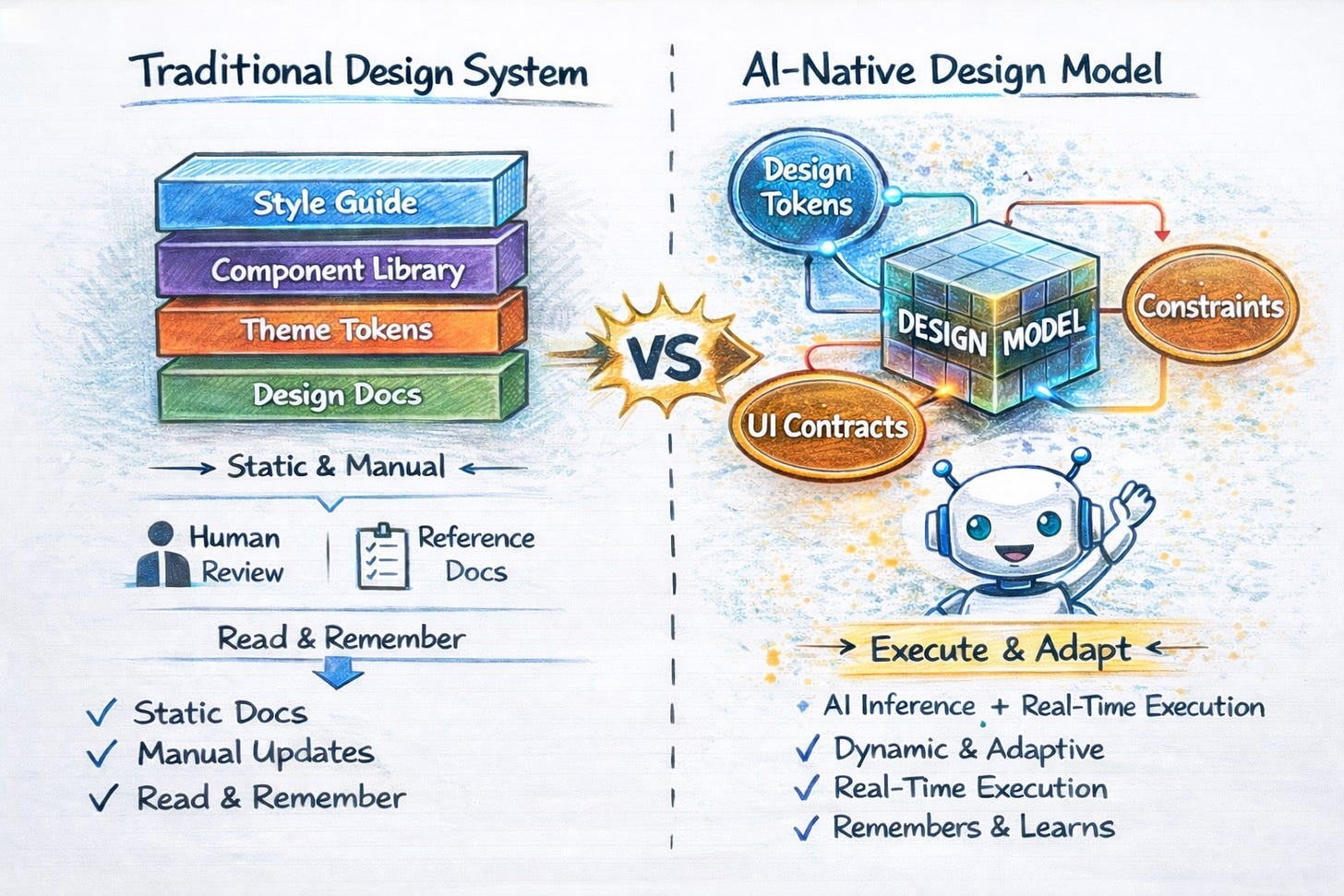

Our design systems were never built to operate at AI speed.

They were built to be read.

They assume humans will interpret rules, remember conventions, and catch mistakes in review.

AI doesn’t work that way.

This article proposes a shift from Design Systems to what I call a Design Model:

an executable form of design governance that AI tools, agents, and engineers can safely operate inside.

This is still experimental.

But it’s already working.

The problem isn’t generation anymore

AI can generate UI easily now.

Screens. Components. Layouts. Variants.

That part is no longer impressive.

The real problem is something else:

Who decides what is allowed to exist?

Which components are valid?

Which styles are acceptable?

Which combinations should never ship?

When AI can generate anything, what we often get isn’t better UX. It’s AI slop: inconsistent, off-brand, technically valid but unshippable UI.

Design systems were supposed to prevent this.

But they rely on documentation, memory, and human enforcement.

At AI speed, that breaks down.

Going one level up: the Design Model

Instead of asking how AI should generate UI, I started asking a different question:

What should AI be allowed to operate inside of?

That led me to the idea of a Design Model.

A Design Model is an evolution of a design system, expressed as something executable rather than just readable.

It has three parts:

Tokens: define what styles are allowed (colors, spacing, typography, radius, etc.)

Contracts: define what components exist and what props are valid (variants, sizes, defaults)

Constraints: define what decisions are not allowed (for example: only one primary action per view)

Together, these don’t describe UI. They govern it.
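To make the three parts concrete, here is a minimal sketch of a Design Model as plain data in TypeScript. Every type name, token value, and the `isValidProp` helper below is an assumption made for illustration; it is not the schema used in the repo.

```typescript
// Hypothetical shapes for illustration only; not taken from the repo.
type Tokens = {
  color: Record<string, string>;
  spacing: Record<string, number>;
  radius: Record<string, number>;
};

// A contract lists the allowed values and the default for each prop.
type PropContract = { values: readonly string[]; default: string };
type ComponentContract = { props: Record<string, PropContract> };

// A constraint caps how many matching components may appear in one view.
type Constraint = {
  id: string;
  description: string;
  component: string;
  where: Record<string, string>;
  maxPerView: number;
};

type DesignModel = {
  tokens: Tokens;
  contracts: Record<string, ComponentContract>;
  constraints: Constraint[];
};

const model: DesignModel = {
  tokens: {
    color: { primary: "#2563eb", surface: "#ffffff" }, // made-up values
    spacing: { sm: 8, md: 16 },
    radius: { md: 8 },
  },
  contracts: {
    Button: {
      props: {
        variant: { values: ["primary", "secondary", "ghost"], default: "secondary" },
        size: { values: ["sm", "md", "lg"], default: "md" },
      },
    },
  },
  constraints: [
    {
      id: "one-primary-per-view",
      description: "Only one primary action per view",
      component: "Button",
      where: { variant: "primary" },
      maxPerView: 1,
    },
  ],
};

// A contract can answer "is this prop value valid?" without rendering anything.
function isValidProp(m: DesignModel, component: string, prop: string, value: string): boolean {
  const contract = m.contracts[component];
  if (!contract) return false;
  const spec = contract.props[prop];
  if (!spec) return false;
  return spec.values.some((v) => v === value);
}
```

With this data, `isValidProp(model, "Button", "variant", "primary")` is `true`, while a `"danger"` variant is rejected because the contract never declared it.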

A brief analogy (LLMs, but lightly)

If the analogy helps:

A Large Language Model is not a collection of sentences.

It’s a system that encodes patterns and rules well enough to produce the next meaningful output.

In a similar way, a Design Model is not a collection of components.

It encodes design decisions (tokens, contracts, constraints) so valid UI can be resolved, not guessed.

The goal is not to copy how LLMs work.

It’s to apply the same shift: from static artifacts to an executable model.

Generated vs Resolved

In a Design Model, UI isn’t generated. It’s resolved.

An intent comes in from a developer, an AI agent, or a coding assistant.

That intent is evaluated against the model.

If it fits → it resolves cleanly

If it doesn’t → the system explains why and suggests adjustments

No static documentation.

No “please follow the design system 🙏”.

No late-stage reviews trying to catch violations. (My QA team can finally breathe)

Rules are enforced at runtime with guidance, not policing.
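As a rough sketch of what "resolving" could look like, the TypeScript below evaluates an intent against a contract table and returns either resolved props or a reason plus a suggestion. The `Intent` and `Resolution` shapes, the `contracts` table, and the `resolve` function are all hypothetical names chosen for this example, not the repo's API.

```typescript
// Hypothetical shapes for illustration only; not taken from the repo.
type Intent = { component: string; props: Record<string, string> };

type Contract = { props: Record<string, { values: string[]; default: string }> };

// Either the intent resolves, or the model explains why not and what to try.
type Resolution =
  | { ok: true; component: string; props: Record<string, string> }
  | { ok: false; reason: string; suggestion: string };

const contracts: Record<string, Contract> = {
  Button: {
    props: {
      variant: { values: ["primary", "secondary"], default: "secondary" },
      size: { values: ["sm", "md", "lg"], default: "md" },
    },
  },
};

function resolve(intent: Intent): Resolution {
  const contract = contracts[intent.component];
  if (!contract) {
    return {
      ok: false,
      reason: `Unknown component "${intent.component}"`,
      suggestion: `Use one of: ${Object.keys(contracts).join(", ")}`,
    };
  }

  // Start from the contract defaults, then apply the requested props.
  const resolved: Record<string, string> = {};
  for (const propName of Object.keys(contract.props)) {
    resolved[propName] = contract.props[propName].default;
  }

  for (const propName of Object.keys(intent.props)) {
    const spec = contract.props[propName];
    const value = intent.props[propName];
    if (!spec) {
      return {
        ok: false,
        reason: `"${propName}" is not a prop of ${intent.component}`,
        suggestion: `Valid props: ${Object.keys(contract.props).join(", ")}`,
      };
    }
    if (!spec.values.some((v) => v === value)) {
      return {
        ok: false,
        reason: `${intent.component}.${propName} cannot be "${value}"`,
        suggestion: `Use one of: ${spec.values.join(", ")}`,
      };
    }
    resolved[propName] = value;
  }

  return { ok: true, component: intent.component, props: resolved };
}
```

An intent for a Button with `variant: "primary"` resolves with `size` filled in from the default. An intent with `variant: "danger"` comes back with `ok: false`, a reason, and the suggestion `Use one of: primary, secondary`.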

A deliberately small demo

To keep this honest, I built a small demo using just one component: a Button.

That’s intentional.

Buttons are:

universally understood

easy to misuse

visually obvious when wrong

a common source of inconsistency

If a system can’t govern a button, it won’t govern anything more complex.

In the demo:

You can render one or two primary buttons, and both resolve

You can then add a constraint (“only one primary button per view”)

Run the same intent again

The outcome changes without changing the prompt or the renderer

The only thing that changed was the model.
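The demo steps above can be approximated in a few lines of TypeScript. This is a simplified sketch and not the demo's actual code: `ButtonIntent`, `ViewConstraint`, `resolveView`, and `onePrimaryPerView` are hypothetical names, and constraints are modeled here as plain functions over a view.

```typescript
// Hypothetical shapes for illustration only; not the demo's actual code.
type ButtonIntent = { component: "Button"; variant: "primary" | "secondary"; label: string };

// A constraint inspects the whole view and returns a message, or null if satisfied.
type ViewConstraint = (view: ButtonIntent[]) => string | null;

type Model = { constraints: ViewConstraint[] };

// Run every constraint in the model against the view and collect the messages.
function resolveView(model: Model, view: ButtonIntent[]): { ok: boolean; messages: string[] } {
  const messages: string[] = [];
  for (const check of model.constraints) {
    const message = check(view);
    if (message !== null) messages.push(message);
  }
  return { ok: messages.length === 0, messages };
}

const onePrimaryPerView: ViewConstraint = (view) => {
  const count = view.filter((button) => button.variant === "primary").length;
  return count > 1
    ? `Found ${count} primary buttons; only one is allowed per view. Consider making the others secondary.`
    : null;
};

// The same intent is used for both runs: two primary buttons in one view.
const intent: ButtonIntent[] = [
  { component: "Button", variant: "primary", label: "Save" },
  { component: "Button", variant: "primary", label: "Publish" },
];

const withoutRule = resolveView({ constraints: [] }, intent);
const withRule = resolveView({ constraints: [onePrimaryPerView] }, intent);

console.log(withoutRule.ok); // true: nothing in the model forbids two primaries
console.log(withRule.ok); // false: the added constraint rejects the same intent
console.log(withRule.messages[0]);
```

The intent and `resolveView` are identical across both runs. Only the model passed in is different, which is the point of the demo.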

📹 Watch my Demo video above.

Why this matters for AI-assisted coding

AI tools today can read design documentation (via MCP or similar approaches).

But documentation has no authority.

A Design Model does.

It sits between AI and code and answers one question consistently:

“Is this allowed to exist?”

AI can propose.

The model decides.

That shifts AI-assisted coding from:

“Generate UI and hope it’s right”

to:

“Resolve UI against rules and ship with confidence.”

This is still experimental

This is not:

a new product announcement

a framework

replacing designers or Figma

a completed standard

It’s a working exploration of an idea that feels increasingly necessary as AI accelerates how UI is built.

The repo is public. You can fork it, add a component, add a constraint, and try to break it.

Repo link: https://github.com/sherizan/design-model

An open invitation

If you’re researching AI and design systems,

or actively trying to adapt design systems for AI workflows,

I’d genuinely love to join forces and work toward a shared standard.

Design systems helped us scale consistency.

Design Models may be how we scale decision-making.

This is early.

But it feels like the right direction.